+

+Permission is hereby granted, free of charge, to any person obtaining a copy

+of this software and associated documentation files (the "Software"), to deal

+in the Software without restriction, including without limitation the rights

+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

+copies of the Software, and to permit persons to whom the Software is

+furnished to do so, subject to the following conditions:

+

+The above copyright notice and this permission notice shall be included in

+all copies or substantial portions of the Software.

+

+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN

+THE SOFTWARE.

diff --git a/vendor/github.com/adrg/frontmatter/README.md b/vendor/github.com/adrg/frontmatter/README.md

new file mode 100644

index 0000000..1e3eb0f

--- /dev/null

+++ b/vendor/github.com/adrg/frontmatter/README.md

@@ -0,0 +1,170 @@

+Go library for detecting and decoding various content front matter formats.

+

+

+

+  +

+

+

+

+

+  +

+

+

+

+

+  +

+

+

+

+

+  +

+

+

+

+

+  +

+

+

+

+

+  +

+

+

+

+

+  +

+

+

+

+

+  +

+

+

+

+

+  +

+

+

+

+

+  +

+

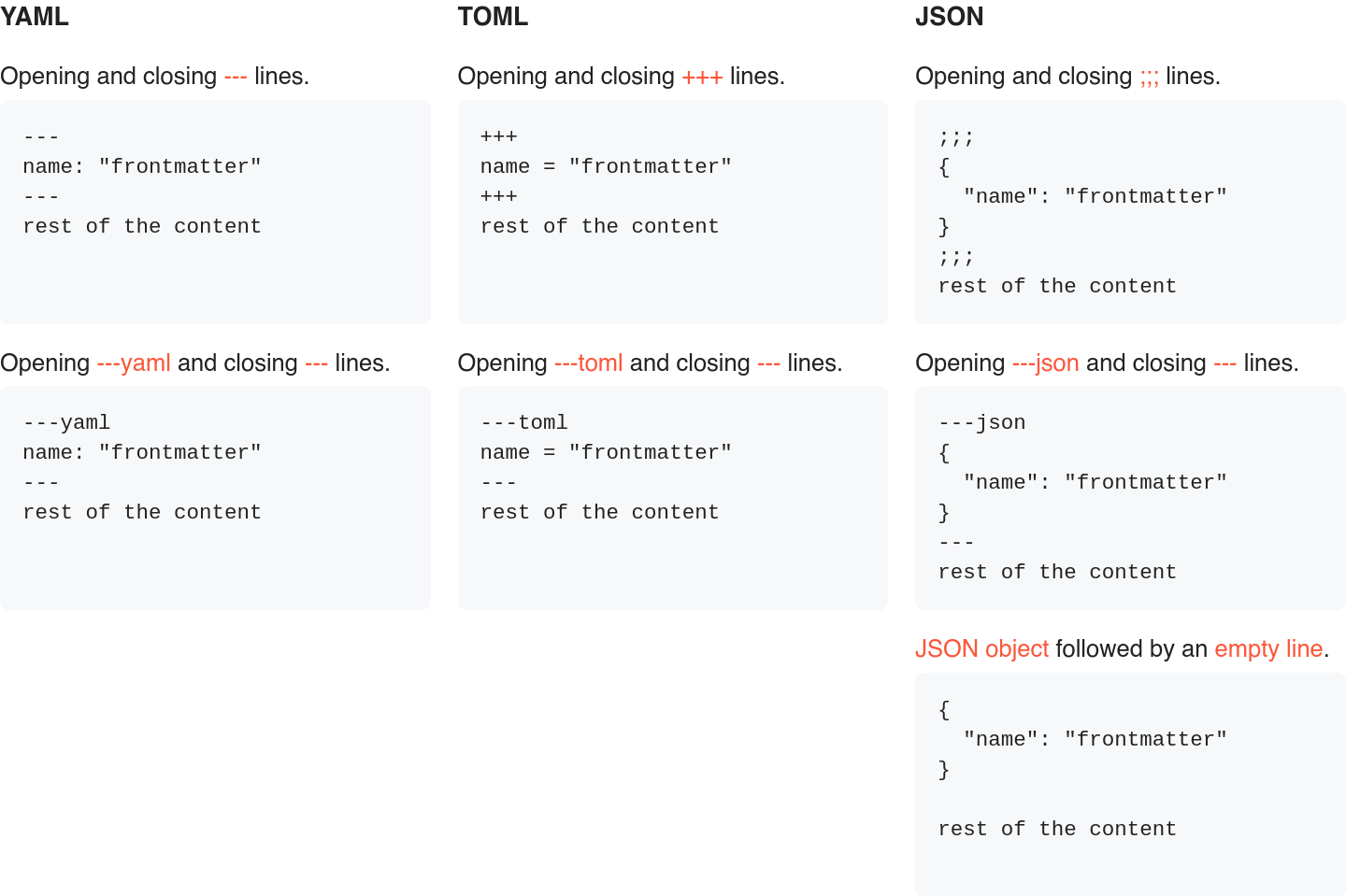

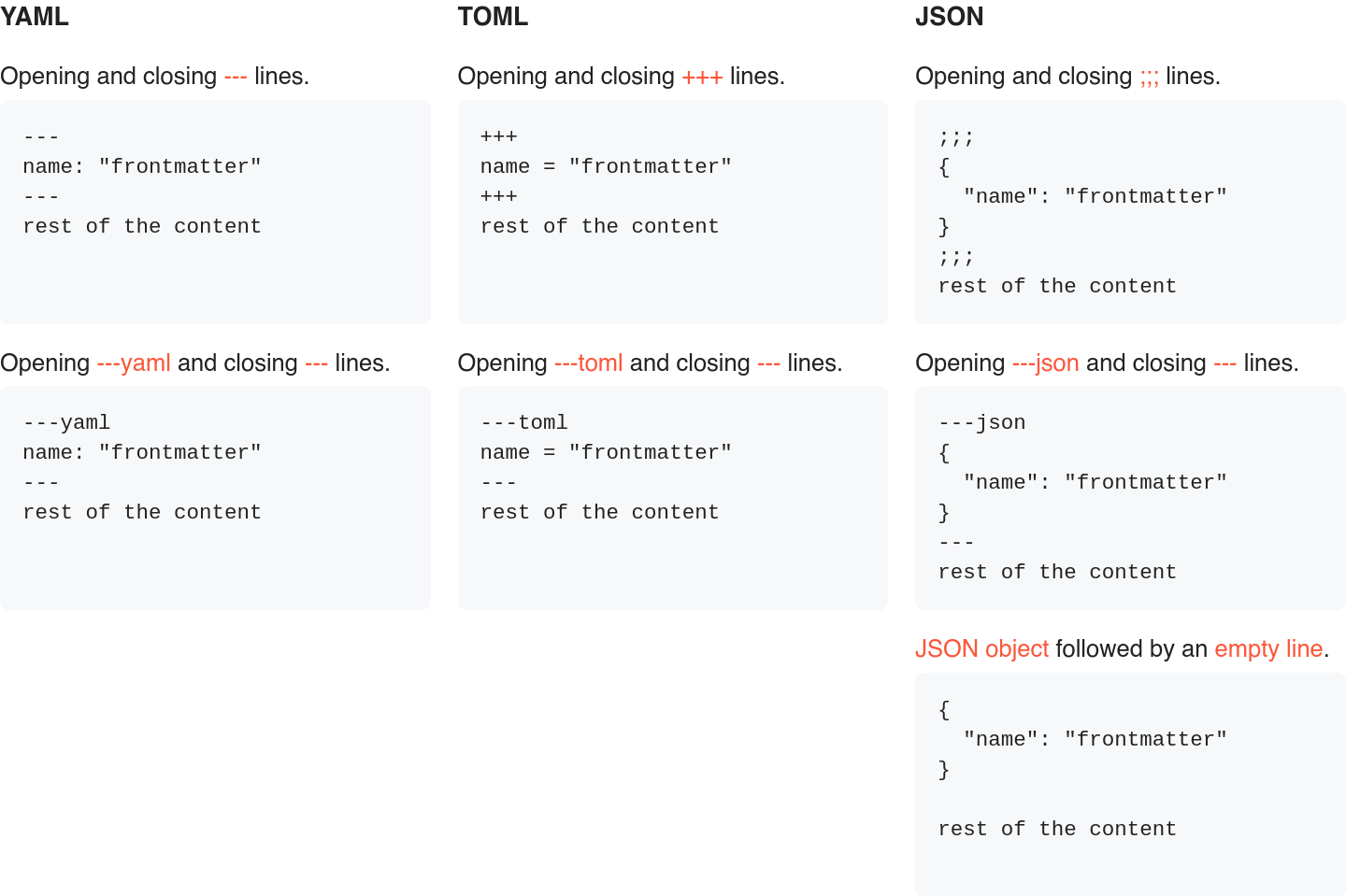

+## Supported formats

+

+The following front matter formats are supported by default. If the default

+formats are not suitable for your use case, you can easily bring your own.

+For more information, see the [usage examples](#usage) below.

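+

+- YAML, identified by:

+  - opening and closing `---` lines.

+  - opening `---yaml` and closing `---` lines.

+- TOML, identified by:

+  - opening and closing `+++` lines.

+  - opening `---toml` and closing `---` lines.

+- JSON, identified by:

+  - opening and closing `;;;` lines.

+  - opening `---json` and closing `---` lines.

+  - a single JSON object followed by an empty line.

+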

+## Installation

+

+```bash

+go get github.com/adrg/frontmatter

+```

+

+## Usage

+

+**Default usage.**

+

+```go

+package main

+

+import (

+ "fmt"

+ "strings"

+

+ "github.com/adrg/frontmatter"

+)

+

+var input = `

+---

+name: "frontmatter"

+tags: ["go", "yaml", "json", "toml"]

+---

+rest of the content`

+

+func main() {

+ var matter struct {

+ Name string `yaml:"name"`

+ Tags []string `yaml:"tags"`

+ }

+

+ rest, err := frontmatter.Parse(strings.NewReader(input), &matter)

+ if err != nil {

+ // Treat error.

+ }

+ // NOTE: If a front matter must be present in the input data, use

+ // frontmatter.MustParse instead.

+

+ fmt.Printf("%+v\n", matter)

+ fmt.Println(string(rest))

+

+ // Output:

+ // {Name:frontmatter Tags:[go yaml json toml]}

+ // rest of the content

+}

+```

+

+**Bring your own formats.**

+

+```go

+package main

+

+import (

+ "fmt"

+ "strings"

+

+ "github.com/adrg/frontmatter"

+ "gopkg.in/yaml.v2"

+)

+

+var input = `

+...

+name: "frontmatter"

+tags: ["go", "yaml", "json", "toml"]

+...

+rest of the content`

+

+func main() {

+ var matter struct {

+ Name string `yaml:"name"`

+ Tags []string `yaml:"tags"`

+ }

+

+ formats := []*frontmatter.Format{

+ frontmatter.NewFormat("...", "...", yaml.Unmarshal),

+ }

+

+ rest, err := frontmatter.Parse(strings.NewReader(input), &matter, formats...)

+ if err != nil {

+ // Treat error.

+ }

+ // NOTE: If a front matter must be present in the input data, use

+ // frontmatter.MustParse instead.

+

+ fmt.Printf("%+v\n", matter)

+ fmt.Println(string(rest))

+

+ // Output:

+ // {Name:frontmatter Tags:[go yaml json toml]}

+ // rest of the content

+}

+```

+

+Full documentation can be found at: https://pkg.go.dev/github.com/adrg/frontmatter.

+

+## Stargazers over time

+

+[Stargazers over time](https://starchart.cc/adrg/frontmatter)

+

+## Contributing

+

+Contributions in the form of pull requests, issues or just general feedback,

+are always welcome.

+See [CONTRIBUTING.md](CONTRIBUTING.md).

+

+## Buy me a coffee

+

+If you found this project useful and want to support it, consider buying me a coffee.

+

+## License

+

+Copyright (c) 2020 Adrian-George Bostan.

+

+This project is licensed under the [MIT license](https://opensource.org/licenses/MIT).

+See [LICENSE](LICENSE) for more details.

diff --git a/vendor/github.com/adrg/frontmatter/format.go b/vendor/github.com/adrg/frontmatter/format.go

new file mode 100644

index 0000000..e7d2a9f

--- /dev/null

+++ b/vendor/github.com/adrg/frontmatter/format.go

@@ -0,0 +1,70 @@

+package frontmatter

+

+import (

+ "encoding/json"

+

+ "github.com/BurntSushi/toml"

+ "gopkg.in/yaml.v2"

+)

+

+// UnmarshalFunc decodes the passed in `data` and stores it into

+// the value pointed to by `v`.

+type UnmarshalFunc func(data []byte, v interface{}) error

+

+// Format describes a front matter. It holds all the information

+// necessary in order to detect and decode a front matter format.

+type Format struct {

+ // Start defines the starting delimiter of the front matter.

+ // E.g.: `---` or `---yaml`.

+ Start string

+

+ // End defines the ending delimiter of the front matter.

+ // E.g.: `---`.

+ End string

+

+ // Unmarshal defines the unmarshal function used to decode

+ // the front matter data, after it has been detected.

+ // E.g.: json.Unmarshal (from the `encoding/json` package).

+ Unmarshal UnmarshalFunc

+

+ // UnmarshalDelims specifies whether the front matter

+ // delimiters are included in the data to be unmarshaled.

+ // Should be `false` in most cases.

+ UnmarshalDelims bool

+

+ // RequiresNewLine specifies whether a new (empty) line is

+ // required after the front matter.

+ // Should be `false` in most cases.

+ RequiresNewLine bool

+}

+

+// NewFormat returns a new front matter format.

+func NewFormat(start, end string, unmarshal UnmarshalFunc) *Format {

+ return newFormat(start, end, unmarshal, false, false)

+}

+

+func newFormat(start, end string, unmarshal UnmarshalFunc,

+ unmarshalDelims, requiresNewLine bool) *Format {

+ return &Format{

+ Start: start,

+ End: end,

+ Unmarshal: unmarshal,

+ UnmarshalDelims: unmarshalDelims,

+ RequiresNewLine: requiresNewLine,

+ }

+}

+

+func defaultFormats() []*Format {

+ return []*Format{

+ // YAML.

+ newFormat("---", "---", yaml.Unmarshal, false, false),

+ newFormat("---yaml", "---", yaml.Unmarshal, false, false),

+ // TOML.

+ newFormat("+++", "+++", toml.Unmarshal, false, false),

+ newFormat("---toml", "---", toml.Unmarshal, false, false),

+ // JSON.

+ newFormat(";;;", ";;;", json.Unmarshal, false, false),

+ newFormat("---json", "---", json.Unmarshal, false, false),

+ newFormat("{", "}", json.Unmarshal, true, true),

+ }

+}
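
Reviewer note on `format.go`: detection boils down to comparing the first non-empty line against each format's `Start` delimiter and taking the first match. The sketch below illustrates that lookup using only the standard library. The names `decodeFunc`, `format` and `pickFormat` are hypothetical stand-ins, not part of the vendored package, and only JSON decoders are registered so that no third-party imports are needed.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// decodeFunc has the same shape as the vendored UnmarshalFunc type.
type decodeFunc func(data []byte, v interface{}) error

// format is a hypothetical, trimmed-down stand-in for frontmatter.Format.
type format struct {
	start, end string
	decode     decodeFunc
}

// pickFormat returns the first format whose start delimiter equals the
// (already trimmed) first non-empty line of the input.
func pickFormat(firstLine string, formats []format) (format, bool) {
	for _, f := range formats {
		if f.start == firstLine {
			return f, true
		}
	}
	return format{}, false
}

func main() {
	// Only JSON is registered here so the sketch needs nothing beyond the
	// standard library.
	formats := []format{
		{start: ";;;", end: ";;;", decode: json.Unmarshal},
		{start: "---json", end: "---", decode: json.Unmarshal},
	}

	f, ok := pickFormat("---json", formats)
	if !ok {
		fmt.Println("no matching format")
		return
	}

	var meta map[string]interface{}
	if err := f.decode([]byte(`{"name": "frontmatter"}`), &meta); err != nil {
		fmt.Println("decode error:", err)
		return
	}
	fmt.Println("closing delimiter:", f.end, "name:", meta["name"])
}
```

Because the first match wins, the order of the slice matters whenever two formats share a start delimiter.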

diff --git a/vendor/github.com/adrg/frontmatter/frontmatter.go b/vendor/github.com/adrg/frontmatter/frontmatter.go

new file mode 100644

index 0000000..b93a9cd

--- /dev/null

+++ b/vendor/github.com/adrg/frontmatter/frontmatter.go

@@ -0,0 +1,47 @@

+/*

+Package frontmatter implements detection and decoding for various content

+front matter formats.

+

+ The following front matter formats are supported by default.

+

+ - YAML identified by:

+ • opening and closing `---` lines.

+ • opening `---yaml` and closing `---` lines.

+ - TOML identified by:

+ • opening and closing `+++` lines.

+ • opening `---toml` and closing `---` lines.

+ - JSON identified by:

+ • opening and closing `;;;` lines.

+ • opening `---json` and closing `---` lines.

+ • a single JSON object followed by an empty line.

+

+If the default formats are not suitable for your use case, you can easily bring

+your own. See the examples for more information.

+*/

+package frontmatter

+

+import (

+ "errors"

+ "io"

+)

+

+// ErrNotFound is reported by `MustParse` when a front matter is not found.

+var ErrNotFound = errors.New("not found")

+

+// Parse decodes the front matter from the specified reader into the value

+// pointed to by `v`, and returns the rest of the data. If a front matter

+// is not present, the original data is returned and `v` is left unchanged.

+// Front matters are detected and decoded based on the passed in `formats`.

+// If no formats are provided, the default formats are used.

+func Parse(r io.Reader, v interface{}, formats ...*Format) ([]byte, error) {

+ return newParser(r).parse(v, formats, false)

+}

+

+// MustParse decodes the front matter from the specified reader into the

+// value pointed to by `v`, and returns the rest of the data. If a front

+// matter is not present, `ErrNotFound` is reported.

+// Front matters are detected and decoded based on the passed in `formats`.

+// If no formats are provided, the default formats are used.

+func MustParse(r io.Reader, v interface{}, formats ...*Format) ([]byte, error) {

+ return newParser(r).parse(v, formats, true)

+}
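
Reviewer note on `frontmatter.go`: `Parse` and `MustParse` differ only in how a missing front matter is treated. The sketch below shows that contract with a sentinel error, using only the standard library. `split` and `errNotFound` are hypothetical names for illustration. Unlike the vendored parser, this helper understands only `---` delimiters, does not skip leading blank lines, and expects a newline after the closing delimiter.

```go
package main

import (
	"errors"
	"fmt"
	"strings"
)

// errNotFound plays the role of frontmatter.ErrNotFound in this sketch.
var errNotFound = errors.New("not found")

// split is a hypothetical helper that only understands "---" delimiters.
// With must set to false it behaves like Parse: a missing front matter is not
// an error and the input is returned untouched. With must set to true it
// behaves like MustParse and reports errNotFound instead.
func split(input string, must bool) (matter, rest string, err error) {
	const delim = "---\n"

	notFound := func() (string, string, error) {
		if must {
			return "", "", errNotFound
		}
		return "", input, nil
	}

	if !strings.HasPrefix(input, delim) {
		return notFound()
	}
	body := input[len(delim):]

	// The closing delimiter must sit on its own line.
	if strings.HasPrefix(body, delim) {
		return "", body[len(delim):], nil
	}
	idx := strings.Index(body, "\n"+delim)
	if idx < 0 {
		return notFound()
	}
	return body[:idx+1], body[idx+1+len(delim):], nil
}

func main() {
	// Strict mode: callers can branch on the sentinel with errors.Is.
	if _, _, err := split("no front matter here", true); errors.Is(err, errNotFound) {
		fmt.Println("strict mode: front matter is required")
	}

	// Lenient mode: the same input is simply returned as the rest.
	matter, rest, err := split("---\nname: demo\n---\nbody", false)
	fmt.Printf("%q %q %v\n", matter, rest, err)
}
```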

diff --git a/vendor/github.com/adrg/frontmatter/parser.go b/vendor/github.com/adrg/frontmatter/parser.go

new file mode 100644

index 0000000..1034f82

--- /dev/null

+++ b/vendor/github.com/adrg/frontmatter/parser.go

@@ -0,0 +1,132 @@

+package frontmatter

+

+import (

+ "bufio"

+ "bytes"

+ "io"

+)

+

+type parser struct {

+ reader *bufio.Reader

+ output *bytes.Buffer

+

+ read int

+ start int

+ end int

+}

+

+func newParser(r io.Reader) *parser {

+ return &parser{

+ reader: bufio.NewReader(r),

+ output: bytes.NewBuffer(nil),

+ }

+}

+

+func (p *parser) parse(v interface{}, formats []*Format,

+ mustParse bool) ([]byte, error) {

+ // If no formats are provided, use the default ones.

+ if len(formats) == 0 {

+ formats = defaultFormats()

+ }

+

+ // Detect format.

+ f, err := p.detect(formats)

+ if err != nil {

+ return nil, err

+ }

+

+ // Extract front matter.

+ found := f != nil

+ if found {

+ if found, err = p.extract(f, v); err != nil {

+ return nil, err

+ }

+ }

+ if mustParse && !found {

+ return nil, ErrNotFound

+ }

+

+ // Read remaining data.

+ if _, err := p.output.ReadFrom(p.reader); err != nil {

+ return nil, err

+ }

+

+ return p.output.Bytes()[p.end:], nil

+}

+

+func (p *parser) detect(formats []*Format) (*Format, error) {

+ for {

+ read := p.read

+

+ line, atEOF, err := p.readLine()

+ if err != nil || atEOF {

+ return nil, err

+ }

+ if line == "" {

+ continue

+ }

+

+ for _, f := range formats {

+ if f.Start == line {

+ if !f.UnmarshalDelims {

+ read = p.read

+ }

+

+ p.start = read

+ return f, nil

+ }

+ }

+

+ return nil, nil

+ }

+}

+

+func (p *parser) extract(f *Format, v interface{}) (bool, error) {

+ for {

+ read := p.read

+

+ line, atEOF, err := p.readLine()

+ if err != nil {

+ return false, err

+ }

+

+ CheckLine:

+ if line != f.End {

+ if atEOF {

+ return false, err

+ }

+ continue

+ }

+ if f.RequiresNewLine {

+ if line, atEOF, err = p.readLine(); err != nil {

+ return false, err

+ }

+ if line != "" {

+ goto CheckLine

+ }

+ }

+ if f.UnmarshalDelims {

+ read = p.read

+ }

+

+ if err := f.Unmarshal(p.output.Bytes()[p.start:read], v); err != nil {

+ return false, err

+ }

+

+ p.end = p.read

+ return true, nil

+ }

+}

+

+func (p *parser) readLine() (string, bool, error) {

+ line, err := p.reader.ReadBytes('\n')

+

+ atEOF := err == io.EOF

+ if err != nil && !atEOF {

+ return "", false, err

+ }

+

+ p.read += len(line)

+ _, err = p.output.Write(line)

+ return string(bytes.TrimSpace(line)), atEOF, err

+}
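
Reviewer note on `parser.go`: the parser reads line by line, skips leading blank lines, expects the start delimiter on the first non-empty line, and then collects lines until the end delimiter. If the front matter is absent or never closed, the whole input is returned as the rest. The following is a minimal standard-library sketch of that flow, not the vendored implementation. `splitFrontMatter` is a hypothetical helper, and it leaves out the `UnmarshalDelims` and `RequiresNewLine` handling.

```go
package main

import (
	"bufio"
	"bytes"
	"encoding/json"
	"fmt"
	"io"
	"strings"
)

// splitFrontMatter is a hypothetical helper. It returns the raw front matter,
// the remaining content, and whether a complete front matter was found.
func splitFrontMatter(input, start, end string) ([]byte, []byte, bool, error) {
	br := bufio.NewReader(strings.NewReader(input))
	var all, body bytes.Buffer // all: every byte read so far; body: front matter
	opened := false

	for {
		line, readErr := br.ReadBytes('\n')
		if readErr != nil && readErr != io.EOF {
			return nil, nil, false, readErr
		}
		all.Write(line)
		trimmed := string(bytes.TrimSpace(line))

		switch {
		case !opened && trimmed == "":
			// Skip blank lines before the front matter.
		case !opened && trimmed == start:
			opened = true
		case !opened:
			// First non-empty line is not the start delimiter.
			if _, err := all.ReadFrom(br); err != nil {
				return nil, nil, false, err
			}
			return nil, all.Bytes(), false, nil
		case trimmed == end:
			rest, err := io.ReadAll(br)
			if err != nil {
				return nil, nil, false, err
			}
			return body.Bytes(), rest, true, nil
		default:
			body.Write(line)
		}

		if readErr == io.EOF {
			// Input ended before a complete front matter was seen.
			return nil, all.Bytes(), false, nil
		}
	}
}

func main() {
	input := ";;;\n{\"name\": \"frontmatter\"}\n;;;\nrest of the content"
	matter, rest, found, err := splitFrontMatter(input, ";;;", ";;;")
	if err != nil {
		fmt.Println("error:", err)
		return
	}

	var meta struct {
		Name string `json:"name"`
	}
	if found {
		if err := json.Unmarshal(matter, &meta); err != nil {
			fmt.Println("decode error:", err)
			return
		}
	}
	fmt.Printf("found=%v name=%q rest=%q\n", found, meta.Name, rest)
}
```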

diff --git a/vendor/github.com/google/go-cmp/LICENSE b/vendor/github.com/google/go-cmp/LICENSE

new file mode 100644

index 0000000..32017f8

--- /dev/null

+++ b/vendor/github.com/google/go-cmp/LICENSE

@@ -0,0 +1,27 @@

+Copyright (c) 2017 The Go Authors. All rights reserved.

+

+Redistribution and use in source and binary forms, with or without

+modification, are permitted provided that the following conditions are

+met:

+

+ * Redistributions of source code must retain the above copyright

+notice, this list of conditions and the following disclaimer.

+ * Redistributions in binary form must reproduce the above

+copyright notice, this list of conditions and the following disclaimer

+in the documentation and/or other materials provided with the

+distribution.

+ * Neither the name of Google Inc. nor the names of its

+contributors may be used to endorse or promote products derived from

+this software without specific prior written permission.

+

+THIS SOFTWARE IS PROVIDED BY THE COPYRIGHT HOLDERS AND CONTRIBUTORS

+"AS IS" AND ANY EXPRESS OR IMPLIED WARRANTIES, INCLUDING, BUT NOT

+LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR

+A PARTICULAR PURPOSE ARE DISCLAIMED. IN NO EVENT SHALL THE COPYRIGHT

+OWNER OR CONTRIBUTORS BE LIABLE FOR ANY DIRECT, INDIRECT, INCIDENTAL,

+SPECIAL, EXEMPLARY, OR CONSEQUENTIAL DAMAGES (INCLUDING, BUT NOT

+LIMITED TO, PROCUREMENT OF SUBSTITUTE GOODS OR SERVICES; LOSS OF USE,

+DATA, OR PROFITS; OR BUSINESS INTERRUPTION) HOWEVER CAUSED AND ON ANY

+THEORY OF LIABILITY, WHETHER IN CONTRACT, STRICT LIABILITY, OR TORT

+(INCLUDING NEGLIGENCE OR OTHERWISE) ARISING IN ANY WAY OUT OF THE USE

+OF THIS SOFTWARE, EVEN IF ADVISED OF THE POSSIBILITY OF SUCH DAMAGE.

diff --git a/vendor/github.com/google/go-cmp/cmp/compare.go b/vendor/github.com/google/go-cmp/cmp/compare.go

new file mode 100644

index 0000000..0f5b8a4

--- /dev/null

+++ b/vendor/github.com/google/go-cmp/cmp/compare.go

@@ -0,0 +1,671 @@

+// Copyright 2017, The Go Authors. All rights reserved.

+// Use of this source code is governed by a BSD-style

+// license that can be found in the LICENSE file.

+

+// Package cmp determines equality of values.

+//

+// This package is intended to be a more powerful and safer alternative to

+// [reflect.DeepEqual] for comparing whether two values are semantically equal.

+// It is intended to only be used in tests, as performance is not a goal and

+// it may panic if it cannot compare the values. Its propensity towards

+// panicking means that it's unsuitable for production environments where a

+// spurious panic may be fatal.

+//

+// The primary features of cmp are:

+//

+// - When the default behavior of equality does not suit the test's needs,

+// custom equality functions can override the equality operation.

+// For example, an equality function may report floats as equal so long as

+// they are within some tolerance of each other.

+//

+// - Types with an Equal method (e.g., [time.Time.Equal]) may use that method

+// to determine equality. This allows package authors to determine

+// the equality operation for the types that they define.

+//

+// - If no custom equality functions are used and no Equal method is defined,

+// equality is determined by recursively comparing the primitive kinds on

+// both values, much like [reflect.DeepEqual]. Unlike [reflect.DeepEqual],

+// unexported fields are not compared by default; they result in panics

+// unless suppressed by using an [Ignore] option

+// (see [github.com/google/go-cmp/cmp/cmpopts.IgnoreUnexported])

+// or explicitly compared using the [Exporter] option.

+package cmp

+

+import (

+ "fmt"

+ "reflect"

+ "strings"

+

+ "github.com/google/go-cmp/cmp/internal/diff"

+ "github.com/google/go-cmp/cmp/internal/function"

+ "github.com/google/go-cmp/cmp/internal/value"

+)

+

+// TODO(≥go1.18): Use any instead of interface{}.

+

+// Equal reports whether x and y are equal by recursively applying the

+// following rules in the given order to x and y and all of their sub-values:

+//

+// - Let S be the set of all [Ignore], [Transformer], and [Comparer] options that

+// remain after applying all path filters, value filters, and type filters.

+// If at least one [Ignore] exists in S, then the comparison is ignored.

+// If the number of [Transformer] and [Comparer] options in S is greater than one,

+// then Equal panics because it is ambiguous which option to use.

+// If S contains a single [Transformer], then use that to transform

+// the current values and recursively call Equal on the output values.

+// If S contains a single [Comparer], then use that to compare the current values.

+// Otherwise, evaluation proceeds to the next rule.

+//

+// - If the values have an Equal method of the form "(T) Equal(T) bool" or

+// "(T) Equal(I) bool" where T is assignable to I, then use the result of

+// x.Equal(y) even if x or y is nil. Otherwise, no such method exists and

+// evaluation proceeds to the next rule.

+//

+// - Lastly, try to compare x and y based on their basic kinds.

+// Simple kinds like booleans, integers, floats, complex numbers, strings,

+// and channels are compared using the equivalent of the == operator in Go.

+// Functions are only equal if they are both nil, otherwise they are unequal.

+//

+// Structs are equal if recursively calling Equal on all fields reports equal.

+// If a struct contains unexported fields, Equal panics unless an [Ignore] option

+// (e.g., [github.com/google/go-cmp/cmp/cmpopts.IgnoreUnexported]) ignores that field

+// or the [Exporter] option explicitly permits comparing the unexported field.

+//

+// Slices are equal if they are both nil or both non-nil, where recursively

+// calling Equal on all non-ignored slice or array elements reports equal.

+// Empty non-nil slices and nil slices are not equal; to equate empty slices,

+// consider using [github.com/google/go-cmp/cmp/cmpopts.EquateEmpty].

+//

+// Maps are equal if they are both nil or both non-nil, where recursively

+// calling Equal on all non-ignored map entries reports equal.

+// Map keys are equal according to the == operator.

+// To use custom comparisons for map keys, consider using

+// [github.com/google/go-cmp/cmp/cmpopts.SortMaps].

+// Empty non-nil maps and nil maps are not equal; to equate empty maps,

+// consider using [github.com/google/go-cmp/cmp/cmpopts.EquateEmpty].

+//

+// Pointers and interfaces are equal if they are both nil or both non-nil,

+// where they have the same underlying concrete type and recursively

+// calling Equal on the underlying values reports equal.

+//

+// Before recursing into a pointer, slice element, or map, the current path

+// is checked to detect whether the address has already been visited.

+// If there is a cycle, then the pointed at values are considered equal

+// only if both addresses were previously visited in the same path step.

+func Equal(x, y interface{}, opts ...Option) bool {

+ s := newState(opts)

+ s.compareAny(rootStep(x, y))

+ return s.result.Equal()

+}

+

+// Diff returns a human-readable report of the differences between two values:

+// y - x. It returns an empty string if and only if Equal returns true for the

+// same input values and options.

+//

+// The output is displayed as a literal in pseudo-Go syntax.

+// At the start of each line, a "-" prefix indicates an element removed from x,

+// a "+" prefix indicates an element added from y, and the lack of a prefix

+// indicates an element common to both x and y. If possible, the output

+// uses fmt.Stringer.String or error.Error methods to produce more humanly

+// readable outputs. In such cases, the string is prefixed with either an

+// 's' or 'e' character, respectively, to indicate that the method was called.

+//

+// Do not depend on this output being stable. If you need the ability to

+// programmatically interpret the difference, consider using a custom Reporter.

+func Diff(x, y interface{}, opts ...Option) string {

+ s := newState(opts)

+

+ // Optimization: If there are no other reporters, we can optimize for the

+ // common case where the result is equal (and thus no reported difference).

+ // This avoids the expensive construction of a difference tree.

+ if len(s.reporters) == 0 {

+ s.compareAny(rootStep(x, y))

+ if s.result.Equal() {

+ return ""

+ }

+ s.result = diff.Result{} // Reset results

+ }

+

+ r := new(defaultReporter)

+ s.reporters = append(s.reporters, reporter{r})

+ s.compareAny(rootStep(x, y))

+ d := r.String()

+ if (d == "") != s.result.Equal() {

+ panic("inconsistent difference and equality results")

+ }

+ return d

+}

+

+// rootStep constructs the first path step. If x and y have differing types,

+// then they are stored within an empty interface type.

+func rootStep(x, y interface{}) PathStep {

+ vx := reflect.ValueOf(x)

+ vy := reflect.ValueOf(y)

+

+ // If the inputs are different types, auto-wrap them in an empty interface

+ // so that they have the same parent type.

+ var t reflect.Type

+ if !vx.IsValid() || !vy.IsValid() || vx.Type() != vy.Type() {

+ t = anyType

+ if vx.IsValid() {

+ vvx := reflect.New(t).Elem()

+ vvx.Set(vx)

+ vx = vvx

+ }

+ if vy.IsValid() {

+ vvy := reflect.New(t).Elem()

+ vvy.Set(vy)

+ vy = vvy

+ }

+ } else {

+ t = vx.Type()

+ }

+

+ return &pathStep{t, vx, vy}

+}

+

+type state struct {

+ // These fields represent the "comparison state".

+ // Calling statelessCompare must not result in observable changes to these.

+ result diff.Result // The current result of comparison

+ curPath Path // The current path in the value tree

+ curPtrs pointerPath // The current set of visited pointers

+ reporters []reporter // Optional reporters

+

+ // recChecker checks for infinite cycles applying the same set of

+ // transformers upon the output of itself.

+ recChecker recChecker

+

+ // dynChecker triggers pseudo-random checks for option correctness.

+ // It is safe for statelessCompare to mutate this value.

+ dynChecker dynChecker

+

+ // These fields, once set by processOption, will not change.

+ exporters []exporter // List of exporters for structs with unexported fields

+ opts Options // List of all fundamental and filter options

+}

+

+func newState(opts []Option) *state {

+ // Always ensure a validator option exists to validate the inputs.

+ s := &state{opts: Options{validator{}}}

+ s.curPtrs.Init()

+ s.processOption(Options(opts))

+ return s

+}

+

+func (s *state) processOption(opt Option) {

+ switch opt := opt.(type) {

+ case nil:

+ case Options:

+ for _, o := range opt {

+ s.processOption(o)

+ }

+ case coreOption:

+ type filtered interface {

+ isFiltered() bool

+ }

+ if fopt, ok := opt.(filtered); ok && !fopt.isFiltered() {

+ panic(fmt.Sprintf("cannot use an unfiltered option: %v", opt))

+ }

+ s.opts = append(s.opts, opt)

+ case exporter:

+ s.exporters = append(s.exporters, opt)

+ case reporter:

+ s.reporters = append(s.reporters, opt)

+ default:

+ panic(fmt.Sprintf("unknown option %T", opt))

+ }

+}

+

+// statelessCompare compares two values and returns the result.

+// This function is stateless in that it does not alter the current result,

+// or output to any registered reporters.

+func (s *state) statelessCompare(step PathStep) diff.Result {

+ // We do not save and restore curPath and curPtrs because all of the

+ // compareX methods should properly push and pop from them.

+ // It is an implementation bug if the contents of the paths differ from

+ // when calling this function to when returning from it.

+

+ oldResult, oldReporters := s.result, s.reporters

+ s.result = diff.Result{} // Reset result

+ s.reporters = nil // Remove reporters to avoid spurious printouts

+ s.compareAny(step)

+ res := s.result

+ s.result, s.reporters = oldResult, oldReporters

+ return res

+}

+

+func (s *state) compareAny(step PathStep) {

+ // Update the path stack.

+ s.curPath.push(step)

+ defer s.curPath.pop()

+ for _, r := range s.reporters {

+ r.PushStep(step)

+ defer r.PopStep()

+ }

+ s.recChecker.Check(s.curPath)

+

+ // Cycle-detection for slice elements (see NOTE in compareSlice).

+ t := step.Type()

+ vx, vy := step.Values()

+ if si, ok := step.(SliceIndex); ok && si.isSlice && vx.IsValid() && vy.IsValid() {

+ px, py := vx.Addr(), vy.Addr()

+ if eq, visited := s.curPtrs.Push(px, py); visited {

+ s.report(eq, reportByCycle)

+ return

+ }

+ defer s.curPtrs.Pop(px, py)

+ }

+

+ // Rule 1: Check whether an option applies on this node in the value tree.

+ if s.tryOptions(t, vx, vy) {

+ return

+ }

+

+ // Rule 2: Check whether the type has a valid Equal method.

+ if s.tryMethod(t, vx, vy) {

+ return

+ }

+

+ // Rule 3: Compare based on the underlying kind.

+ switch t.Kind() {

+ case reflect.Bool:

+ s.report(vx.Bool() == vy.Bool(), 0)

+ case reflect.Int, reflect.Int8, reflect.Int16, reflect.Int32, reflect.Int64:

+ s.report(vx.Int() == vy.Int(), 0)

+ case reflect.Uint, reflect.Uint8, reflect.Uint16, reflect.Uint32, reflect.Uint64, reflect.Uintptr:

+ s.report(vx.Uint() == vy.Uint(), 0)

+ case reflect.Float32, reflect.Float64:

+ s.report(vx.Float() == vy.Float(), 0)

+ case reflect.Complex64, reflect.Complex128:

+ s.report(vx.Complex() == vy.Complex(), 0)

+ case reflect.String:

+ s.report(vx.String() == vy.String(), 0)

+ case reflect.Chan, reflect.UnsafePointer:

+ s.report(vx.Pointer() == vy.Pointer(), 0)

+ case reflect.Func:

+ s.report(vx.IsNil() && vy.IsNil(), 0)

+ case reflect.Struct:

+ s.compareStruct(t, vx, vy)

+ case reflect.Slice, reflect.Array:

+ s.compareSlice(t, vx, vy)

+ case reflect.Map:

+ s.compareMap(t, vx, vy)

+ case reflect.Ptr:

+ s.comparePtr(t, vx, vy)

+ case reflect.Interface:

+ s.compareInterface(t, vx, vy)

+ default:

+ panic(fmt.Sprintf("%v kind not handled", t.Kind()))

+ }

+}

+

+func (s *state) tryOptions(t reflect.Type, vx, vy reflect.Value) bool {

+ // Evaluate all filters and apply the remaining options.

+ if opt := s.opts.filter(s, t, vx, vy); opt != nil {

+ opt.apply(s, vx, vy)

+ return true

+ }

+ return false

+}

+

+func (s *state) tryMethod(t reflect.Type, vx, vy reflect.Value) bool {

+ // Check if this type even has an Equal method.

+ m, ok := t.MethodByName("Equal")

+ if !ok || !function.IsType(m.Type, function.EqualAssignable) {

+ return false

+ }

+

+ eq := s.callTTBFunc(m.Func, vx, vy)

+ s.report(eq, reportByMethod)

+ return true

+}

+

+func (s *state) callTRFunc(f, v reflect.Value, step Transform) reflect.Value {

+ if !s.dynChecker.Next() {

+ return f.Call([]reflect.Value{v})[0]

+ }

+

+ // Run the function twice and ensure that we get the same results back.

+ // We run in goroutines so that the race detector (if enabled) can detect

+ // unsafe mutations to the input.

+ c := make(chan reflect.Value)

+ go detectRaces(c, f, v)

+ got := <-c

+ want := f.Call([]reflect.Value{v})[0]

+ if step.vx, step.vy = got, want; !s.statelessCompare(step).Equal() {

+ // To avoid false-positives with non-reflexive equality operations,

+ // we sanity check whether a value is equal to itself.

+ if step.vx, step.vy = want, want; !s.statelessCompare(step).Equal() {

+ return want

+ }

+ panic(fmt.Sprintf("non-deterministic function detected: %s", function.NameOf(f)))

+ }

+ return want

+}

+

+func (s *state) callTTBFunc(f, x, y reflect.Value) bool {

+ if !s.dynChecker.Next() {

+ return f.Call([]reflect.Value{x, y})[0].Bool()

+ }

+

+ // Swapping the input arguments is sufficient to check that

+ // f is symmetric and deterministic.

+ // We run in goroutines so that the race detector (if enabled) can detect

+ // unsafe mutations to the input.

+ c := make(chan reflect.Value)

+ go detectRaces(c, f, y, x)

+ got := <-c

+ want := f.Call([]reflect.Value{x, y})[0].Bool()

+ if !got.IsValid() || got.Bool() != want {

+ panic(fmt.Sprintf("non-deterministic or non-symmetric function detected: %s", function.NameOf(f)))

+ }

+ return want

+}

+

+func detectRaces(c chan<- reflect.Value, f reflect.Value, vs ...reflect.Value) {

+ var ret reflect.Value

+ defer func() {

+ recover() // Ignore panics, let the other call to f panic instead

+ c <- ret

+ }()

+ ret = f.Call(vs)[0]

+}

+

+func (s *state) compareStruct(t reflect.Type, vx, vy reflect.Value) {

+ var addr bool

+ var vax, vay reflect.Value // Addressable versions of vx and vy

+

+ var mayForce, mayForceInit bool

+ step := StructField{&structField{}}

+ for i := 0; i < t.NumField(); i++ {

+ step.typ = t.Field(i).Type

+ step.vx = vx.Field(i)

+ step.vy = vy.Field(i)

+ step.name = t.Field(i).Name

+ step.idx = i

+ step.unexported = !isExported(step.name)

+ if step.unexported {

+ if step.name == "_" {

+ continue

+ }

+ // Defer checking of unexported fields until later to give an

+ // Ignore a chance to ignore the field.

+ if !vax.IsValid() || !vay.IsValid() {

+ // For retrieveUnexportedField to work, the parent struct must

+ // be addressable. Create a new copy of the values if

+ // necessary to make them addressable.

+ addr = vx.CanAddr() || vy.CanAddr()

+ vax = makeAddressable(vx)

+ vay = makeAddressable(vy)

+ }

+ if !mayForceInit {

+ for _, xf := range s.exporters {

+ mayForce = mayForce || xf(t)

+ }

+ mayForceInit = true

+ }

+ step.mayForce = mayForce

+ step.paddr = addr

+ step.pvx = vax

+ step.pvy = vay

+ step.field = t.Field(i)

+ }

+ s.compareAny(step)

+ }

+}

+

+func (s *state) compareSlice(t reflect.Type, vx, vy reflect.Value) {

+ isSlice := t.Kind() == reflect.Slice

+ if isSlice && (vx.IsNil() || vy.IsNil()) {

+ s.report(vx.IsNil() && vy.IsNil(), 0)

+ return

+ }

+

+ // NOTE: It is incorrect to call curPtrs.Push on the slice header pointer

+ // since a slice represents a list of pointers, rather than a single pointer.

+ // The pointer checking logic must be handled on a per-element basis

+ // in compareAny.

+ //

+ // A slice header (see reflect.SliceHeader) in Go is a tuple of a starting

+ // pointer P, a length N, and a capacity C. Supposing each slice element has

+ // a memory size of M, then the slice is equivalent to the list of pointers:

+ // [P+i*M for i in range(N)]

+ //

+ // For example, v[:0] and v[:1] are slices with the same starting pointer,

+ // but they are clearly different values. Using the slice pointer alone

+ // violates the assumption that equal pointers imply equal values.

+

+ step := SliceIndex{&sliceIndex{pathStep: pathStep{typ: t.Elem()}, isSlice: isSlice}}

+ withIndexes := func(ix, iy int) SliceIndex {

+ if ix >= 0 {

+ step.vx, step.xkey = vx.Index(ix), ix

+ } else {

+ step.vx, step.xkey = reflect.Value{}, -1

+ }

+ if iy >= 0 {

+ step.vy, step.ykey = vy.Index(iy), iy

+ } else {

+ step.vy, step.ykey = reflect.Value{}, -1

+ }

+ return step

+ }

+

+ // Ignore options are able to ignore missing elements in a slice.

+ // However, detecting these reliably requires an optimal differencing

+ // algorithm, which diff.Difference is not.

+ //

+ // Instead, we first iterate through both slices to detect which elements

+ // would be ignored if standing alone. The indexes of non-discarded elements

+ // are stored in a separate slice, which diffing is then performed on.

+ var indexesX, indexesY []int

+ var ignoredX, ignoredY []bool

+ for ix := 0; ix < vx.Len(); ix++ {

+ ignored := s.statelessCompare(withIndexes(ix, -1)).NumDiff == 0

+ if !ignored {

+ indexesX = append(indexesX, ix)

+ }

+ ignoredX = append(ignoredX, ignored)

+ }

+ for iy := 0; iy < vy.Len(); iy++ {

+ ignored := s.statelessCompare(withIndexes(-1, iy)).NumDiff == 0

+ if !ignored {

+ indexesY = append(indexesY, iy)

+ }

+ ignoredY = append(ignoredY, ignored)

+ }

+

+ // Compute an edit-script for slices vx and vy (excluding ignored elements).

+ edits := diff.Difference(len(indexesX), len(indexesY), func(ix, iy int) diff.Result {

+ return s.statelessCompare(withIndexes(indexesX[ix], indexesY[iy]))

+ })

+

+ // Replay the ignore-scripts and the edit-script.

+ var ix, iy int

+ for ix < vx.Len() || iy < vy.Len() {

+ var e diff.EditType

+ switch {

+ case ix < len(ignoredX) && ignoredX[ix]:

+ e = diff.UniqueX

+ case iy < len(ignoredY) && ignoredY[iy]:

+ e = diff.UniqueY

+ default:

+ e, edits = edits[0], edits[1:]

+ }

+ switch e {

+ case diff.UniqueX:

+ s.compareAny(withIndexes(ix, -1))

+ ix++

+ case diff.UniqueY:

+ s.compareAny(withIndexes(-1, iy))

+ iy++

+ default:

+ s.compareAny(withIndexes(ix, iy))

+ ix++

+ iy++

+ }

+ }

+}

+

+func (s *state) compareMap(t reflect.Type, vx, vy reflect.Value) {

+ if vx.IsNil() || vy.IsNil() {

+ s.report(vx.IsNil() && vy.IsNil(), 0)

+ return

+ }

+

+ // Cycle-detection for maps.

+ if eq, visited := s.curPtrs.Push(vx, vy); visited {

+ s.report(eq, reportByCycle)

+ return

+ }

+ defer s.curPtrs.Pop(vx, vy)

+

+ // We combine and sort the two map keys so that we can perform the

+ // comparisons in a deterministic order.

+ step := MapIndex{&mapIndex{pathStep: pathStep{typ: t.Elem()}}}

+ for _, k := range value.SortKeys(append(vx.MapKeys(), vy.MapKeys()...)) {

+ step.vx = vx.MapIndex(k)

+ step.vy = vy.MapIndex(k)

+ step.key = k

+ if !step.vx.IsValid() && !step.vy.IsValid() {

+ // It is possible for both vx and vy to be invalid if the

+ // key contained a NaN value in it.

+ //

+ // Even with the ability to retrieve NaN keys in Go 1.12,

+ // there still isn't a sensible way to compare the values since

+ // a NaN key may map to multiple unordered values.

+ // The most reasonable way to compare NaNs would be to compare the

+ // set of values. However, this is impossible to do efficiently

+ // since set equality is provably an O(n^2) operation given only

+ // an Equal function. If we had a Less function or Hash function,

+ // this could be done in O(n*log(n)) or O(n), respectively.

+ //

+ // Rather than adding complex logic to deal with NaNs, make it

+ // the user's responsibility to compare such obscure maps.

+ const help = "consider providing a Comparer to compare the map"

+ panic(fmt.Sprintf("%#v has map key with NaNs\n%s", s.curPath, help))

+ }

+ s.compareAny(step)

+ }

+}

+

+func (s *state) comparePtr(t reflect.Type, vx, vy reflect.Value) {

+ if vx.IsNil() || vy.IsNil() {

+ s.report(vx.IsNil() && vy.IsNil(), 0)

+ return

+ }

+

+ // Cycle-detection for pointers.

+ if eq, visited := s.curPtrs.Push(vx, vy); visited {

+ s.report(eq, reportByCycle)

+ return

+ }

+ defer s.curPtrs.Pop(vx, vy)

+

+ vx, vy = vx.Elem(), vy.Elem()

+ s.compareAny(Indirect{&indirect{pathStep{t.Elem(), vx, vy}}})

+}

+

+func (s *state) compareInterface(t reflect.Type, vx, vy reflect.Value) {

+ if vx.IsNil() || vy.IsNil() {

+ s.report(vx.IsNil() && vy.IsNil(), 0)

+ return

+ }

+ vx, vy = vx.Elem(), vy.Elem()

+ if vx.Type() != vy.Type() {

+ s.report(false, 0)

+ return

+ }

+ s.compareAny(TypeAssertion{&typeAssertion{pathStep{vx.Type(), vx, vy}}})

+}

+

+func (s *state) report(eq bool, rf resultFlags) {

+ if rf&reportByIgnore == 0 {

+ if eq {

+ s.result.NumSame++

+ rf |= reportEqual

+ } else {

+ s.result.NumDiff++

+ rf |= reportUnequal

+ }

+ }

+ for _, r := range s.reporters {

+ r.Report(Result{flags: rf})

+ }

+}

+

+// recChecker tracks the state needed to periodically perform checks that

+// user provided transformers are not stuck in an infinitely recursive cycle.

+type recChecker struct{ next int }

+

+// Check scans the Path for any recursive transformers and panics when any

+// recursive transformers are detected. Note that the presence of a

+// recursive Transformer does not necessarily imply an infinite cycle.

+// As such, this check only activates after some minimal number of path steps.

+func (rc *recChecker) Check(p Path) {

+ const minLen = 1 << 16

+ if rc.next == 0 {

+ rc.next = minLen

+ }

+ if len(p) < rc.next {

+ return

+ }

+ rc.next <<= 1

+

+ // Check whether the same transformer has appeared at least twice.

+ var ss []string

+ m := map[Option]int{}

+ for _, ps := range p {

+ if t, ok := ps.(Transform); ok {

+ t := t.Option()

+ if m[t] == 1 { // Transformer was used exactly once before

+ tf := t.(*transformer).fnc.Type()

+ ss = append(ss, fmt.Sprintf("%v: %v => %v", t, tf.In(0), tf.Out(0)))

+ }

+ m[t]++

+ }

+ }

+ if len(ss) > 0 {

+ const warning = "recursive set of Transformers detected"

+ const help = "consider using cmpopts.AcyclicTransformer"

+ set := strings.Join(ss, "\n\t")

+ panic(fmt.Sprintf("%s:\n\t%s\n%s", warning, set, help))

+ }

+}

+

+// dynChecker tracks the state needed to periodically perform checks that

+// user provided functions are symmetric and deterministic.

+// The zero value is safe for immediate use.

+type dynChecker struct{ curr, next int }

+

+// Next increments the state and reports whether a check should be performed.

+//

+// Checks occur every Nth function call, where N is a triangular number:

+//

+// 0 1 3 6 10 15 21 28 36 45 55 66 78 91 105 120 136 153 171 190 ...

+//

+// See https://en.wikipedia.org/wiki/Triangular_number

+//

+// This sequence ensures that the cost of checks drops significantly as

+// the number of function calls grows larger.

+func (dc *dynChecker) Next() bool {

+ ok := dc.curr == dc.next

+ if ok {

+ dc.curr = 0

+ dc.next++

+ }

+ dc.curr++

+ return ok

+}

+

+// makeAddressable returns a value that is always addressable.

+// It returns the input verbatim if it is already addressable,

+// otherwise it creates a new value and returns an addressable copy.

+func makeAddressable(v reflect.Value) reflect.Value {

+ if v.CanAddr() {

+ return v

+ }

+ vc := reflect.New(v.Type()).Elem()

+ vc.Set(v)

+ return vc

+}
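The triangular-number schedule documented on `dynChecker.Next` earlier in this file can be checked in isolation. The following is a minimal sketch, not part of the vendored package: `checker` and `checkedCalls` are hypothetical names, and the method body simply mirrors the logic shown in the diff.

```go
package main

import "fmt"

// checker mirrors the dynChecker logic from the diff. The type name is
// hypothetical and exists only for this illustration.
type checker struct{ curr, next int }

// Next reports whether a check is due on this call. The gap between
// successive checks grows by one each time a check fires.
func (c *checker) Next() bool {
	ok := c.curr == c.next
	if ok {
		c.curr = 0
		c.next++
	}
	c.curr++
	return ok
}

// checkedCalls returns the zero-based call indexes, among the first n calls,
// on which Next reports true.
func checkedCalls(n int) []int {
	var c checker
	var hits []int
	for i := 0; i < n; i++ {
		if c.Next() {
			hits = append(hits, i)
		}
	}
	return hits
}

func main() {
	fmt.Println(checkedCalls(30)) // [0 1 3 6 10 15 21 28]
}
```

Because the gaps grow linearly, roughly sqrt(2n) checks occur in n calls, which is why the relative cost of checking falls as the call count rises.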

diff --git a/vendor/github.com/google/go-cmp/cmp/export.go b/vendor/github.com/google/go-cmp/cmp/export.go

new file mode 100644

index 0000000..29f82fe

--- /dev/null

+++ b/vendor/github.com/google/go-cmp/cmp/export.go

@@ -0,0 +1,31 @@

+// Copyright 2017, The Go Authors. All rights reserved.

+// Use of this source code is governed by a BSD-style

+// license that can be found in the LICENSE file.

+

+package cmp

+

+import (

+ "reflect"

+ "unsafe"

+)

+

+// retrieveUnexportedField uses unsafe to forcibly retrieve any field from

+// a struct such that the value has read-write permissions.

+//

+// The parent struct, v, must be addressable, while f must be a StructField

+// describing the field to retrieve. If addr is false,

+// then the returned value will be shallow copied to be non-addressable.

+func retrieveUnexportedField(v reflect.Value, f reflect.StructField, addr bool) reflect.Value {

+ ve := reflect.NewAt(f.Type, unsafe.Pointer(uintptr(unsafe.Pointer(v.UnsafeAddr()))+f.Offset)).Elem()

+ if !addr {

+ // A field is addressable if and only if the struct is addressable.

+ // If the original parent value was not addressable, shallow copy the

+ // value to make it non-addressable to avoid leaking an implementation

+ // detail of how forcibly exporting a field works.

+ if ve.Kind() == reflect.Interface && ve.IsNil() {

+ return reflect.Zero(f.Type)

+ }

+ return reflect.ValueOf(ve.Interface()).Convert(f.Type)

+ }

+ return ve

+}
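The core trick in `retrieveUnexportedField` is to rebuild a `reflect.Value` from the field's raw address, which drops the read-only flag that reflection normally attaches to unexported fields. A minimal sketch under stated assumptions: `account`, `forceField`, and `ownerOf` are hypothetical names for illustration, and the sketch omits the non-addressable copy step that the vendored function performs when `addr` is false.

```go
package main

import (
	"fmt"
	"reflect"
	"unsafe"
)

// account is a hypothetical type with an unexported field.
type account struct {
	owner string
}

// forceField returns field i of the addressable struct value v as a value
// without the read-only restriction. It panics if v is not addressable.
func forceField(v reflect.Value, i int) reflect.Value {
	f := v.Type().Field(i)
	// Compute the field address from the struct address plus the field offset,
	// then wrap it in a fresh reflect.Value of the field's type.
	return reflect.NewAt(f.Type, unsafe.Pointer(v.UnsafeAddr()+f.Offset)).Elem()
}

// ownerOf reads the unexported field through reflection.
func ownerOf(a *account) string {
	v := reflect.ValueOf(a).Elem() // Elem of a pointer is addressable
	return forceField(v, 0).Interface().(string)
}

func main() {
	a := account{owner: "gopher"}
	v := reflect.ValueOf(&a).Elem()
	fmt.Println(v.Field(0).CanInterface()) // false: normal reflection refuses
	fmt.Println(ownerOf(&a))               // gopher
}
```

This is why `compareStruct` calls `makeAddressable` on the parent values first: `UnsafeAddr` panics on a value that is not addressable.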

diff --git a/vendor/github.com/google/go-cmp/cmp/internal/diff/debug_disable.go b/vendor/github.com/google/go-cmp/cmp/internal/diff/debug_disable.go

new file mode 100644

index 0000000..36062a6

--- /dev/null

+++ b/vendor/github.com/google/go-cmp/cmp/internal/diff/debug_disable.go

@@ -0,0 +1,18 @@

+// Copyright 2017, The Go Authors. All rights reserved.

+// Use of this source code is governed by a BSD-style

+// license that can be found in the LICENSE file.

+

+//go:build !cmp_debug

+// +build !cmp_debug

+

+package diff

+

+var debug debugger

+

+type debugger struct{}

+

+func (debugger) Begin(_, _ int, f EqualFunc, _, _ *EditScript) EqualFunc {

+ return f

+}

+func (debugger) Update() {}

+func (debugger) Finish() {}

diff --git a/vendor/github.com/google/go-cmp/cmp/internal/diff/debug_enable.go b/vendor/github.com/google/go-cmp/cmp/internal/diff/debug_enable.go

new file mode 100644

index 0000000..a3b97a1

--- /dev/null

+++ b/vendor/github.com/google/go-cmp/cmp/internal/diff/debug_enable.go

@@ -0,0 +1,123 @@

+// Copyright 2017, The Go Authors. All rights reserved.

+// Use of this source code is governed by a BSD-style

+// license that can be found in the LICENSE file.

+

+//go:build cmp_debug

+// +build cmp_debug

+

+package diff

+

+import (

+ "fmt"

+ "strings"

+ "sync"

+ "time"

+)

+

+// The algorithm can be seen running in real-time by enabling debugging:

+// go test -tags=cmp_debug -v

+//

+// Example output:

+// === RUN TestDifference/#34

+// ┌───────────────────────────────┐

+// │ \ · · · · · · · · · · · · · · │

+// │ · # · · · · · · · · · · · · · │

+// │ · \ · · · · · · · · · · · · · │

+// │ · · \ · · · · · · · · · · · · │

+// │ · · · X # · · · · · · · · · · │

+// │ · · · # \ · · · · · · · · · · │

+// │ · · · · · # # · · · · · · · · │

+// │ · · · · · # \ · · · · · · · · │

+// │ · · · · · · · \ · · · · · · · │

+// │ · · · · · · · · \ · · · · · · │

+// │ · · · · · · · · · \ · · · · · │

+// │ · · · · · · · · · · \ · · # · │

+// │ · · · · · · · · · · · \ # # · │

+// │ · · · · · · · · · · · # # # · │

+// │ · · · · · · · · · · # # # # · │

+// │ · · · · · · · · · # # # # # · │

+// │ · · · · · · · · · · · · · · \ │

+// └───────────────────────────────┘

+// [.Y..M.XY......YXYXY.|]

+//

+// The grid represents the edit-graph where the horizontal axis represents

+// list X and the vertical axis represents list Y. The start of the two lists

+// is the top-left, while the ends are the bottom-right. The '·' represents

+// an unexplored node in the graph. The '\' indicates that the two symbols

+// from list X and Y are equal. The 'X' indicates that two symbols are similar

+// (but not exactly equal) to each other. The '#' indicates that the two symbols

+// are different (and not similar). The algorithm traverses this graph trying to

+// make the paths starting in the top-left and the bottom-right connect.

+//

+// The series of '.', 'X', 'Y', and 'M' characters at the bottom represents

+// the currently established path from the forward and reverse searches,

+// separated by a '|' character.

+

+const (

+ updateDelay = 100 * time.Millisecond

+ finishDelay = 500 * time.Millisecond

+ ansiTerminal = true // ANSI escape codes used to move terminal cursor

+)

+

+var debug debugger

+

+type debugger struct {

+ sync.Mutex

+ p1, p2 EditScript

+ fwdPath, revPath *EditScript

+ grid []byte

+ lines int

+}

+

+func (dbg *debugger) Begin(nx, ny int, f EqualFunc, p1, p2 *EditScript) EqualFunc {

+ dbg.Lock()

+ dbg.fwdPath, dbg.revPath = p1, p2

+ top := "┌─" + strings.Repeat("──", nx) + "┐\n"

+ row := "│ " + strings.Repeat("· ", nx) + "│\n"

+ btm := "└─" + strings.Repeat("──", nx) + "┘\n"

+ dbg.grid = []byte(top + strings.Repeat(row, ny) + btm)

+ dbg.lines = strings.Count(dbg.String(), "\n")

+ fmt.Print(dbg)

+

+ // Wrap the EqualFunc so that we can intercept each result.

+ return func(ix, iy int) (r Result) {

+ cell := dbg.grid[len(top)+iy*len(row):][len("│ ")+len("· ")*ix:][:len("·")]

+ for i := range cell {

+ cell[i] = 0 // Zero out the multiple bytes of UTF-8 middle-dot

+ }

+ switch r = f(ix, iy); {

+ case r.Equal():

+ cell[0] = '\\'

+ case r.Similar():

+ cell[0] = 'X'

+ default:

+ cell[0] = '#'

+ }

+ return

+ }

+}

+

+func (dbg *debugger) Update() {

+ dbg.print(updateDelay)

+}

+

+func (dbg *debugger) Finish() {

+ dbg.print(finishDelay)

+ dbg.Unlock()

+}

+

+func (dbg *debugger) String() string {

+ dbg.p1, dbg.p2 = *dbg.fwdPath, dbg.p2[:0]

+ for i := len(*dbg.revPath) - 1; i >= 0; i-- {

+ dbg.p2 = append(dbg.p2, (*dbg.revPath)[i])

+ }

+ return fmt.Sprintf("%s[%v|%v]\n\n", dbg.grid, dbg.p1, dbg.p2)

+}

+

+func (dbg *debugger) print(d time.Duration) {

+ if ansiTerminal {

+ fmt.Printf("\x1b[%dA", dbg.lines) // Reset terminal cursor

+ }

+ fmt.Print(dbg)

+ time.Sleep(d)

+}
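The path string printed under the grid can be summarized without running the debugger. The sketch below assumes the meanings that package diff assigns to its edit types ('.' for Identity, 'X' for UniqueX, 'Y' for UniqueY, 'M' for Modified) and applies the same arithmetic as `EditScript.Dist`, `LenX`, and `LenY`. The names `summary` and `summarize` are hypothetical.

```go
package main

import "fmt"

// summary holds the counts derived from an edit-script string.
type summary struct{ Dist, LenX, LenY int }

// summarize decodes a script written with '.', 'X', 'Y', and 'M'.
// Any other byte is reported as an error.
func summarize(script string) (summary, error) {
	var ni, nx, ny int
	for i := 0; i < len(script); i++ {
		switch script[i] {
		case '.':
			ni++ // identical pair: consumes one symbol from each list
		case 'X':
			nx++ // symbol only in X
		case 'Y':
			ny++ // symbol only in Y
		case 'M':
			// modified pair: consumes one symbol from each list
		default:
			return summary{}, fmt.Errorf("invalid edit %q at offset %d", script[i], i)
		}
	}
	n := len(script)
	return summary{Dist: n - ni, LenX: n - ny, LenY: n - nx}, nil
}

func main() {
	// The forward path from the example output above, without the brackets
	// and the '|' separator.
	s, err := summarize(".Y..M.XY......YXYXY.")
	fmt.Printf("%+v %v\n", s, err) // {Dist:9 LenX:15 LenY:17} <nil>
}
```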

diff --git a/vendor/github.com/google/go-cmp/cmp/internal/diff/diff.go b/vendor/github.com/google/go-cmp/cmp/internal/diff/diff.go

new file mode 100644

index 0000000..a248e54

--- /dev/null

+++ b/vendor/github.com/google/go-cmp/cmp/internal/diff/diff.go

@@ -0,0 +1,402 @@

+// Copyright 2017, The Go Authors. All rights reserved.

+// Use of this source code is governed by a BSD-style

+// license that can be found in the LICENSE file.

+

+// Package diff implements an algorithm for producing edit-scripts.

+// The edit-script is a sequence of operations needed to transform one list

+// of symbols into another (or vice-versa). The edits allowed are insertions,

+// deletions, and modifications. The total number of edits is called the

+// Levenshtein distance, a well-known measure in computer science.

+//

+// This package prioritizes performance over accuracy. That is, the run time

+// is more important than obtaining a minimal Levenshtein distance.

+package diff

+

+import (

+ "math/rand"

+ "time"

+

+ "github.com/google/go-cmp/cmp/internal/flags"

+)

+

+// EditType represents a single operation within an edit-script.

+type EditType uint8

+

+const (

+ // Identity indicates that a symbol pair is identical in both list X and Y.

+ Identity EditType = iota

+ // UniqueX indicates that a symbol only exists in X and not Y.

+ UniqueX

+ // UniqueY indicates that a symbol only exists in Y and not X.

+ UniqueY

+ // Modified indicates that a symbol pair is a modification of each other.

+ Modified

+)

+

+// EditScript represents the series of differences between two lists.

+type EditScript []EditType

+

+// String returns a human-readable string representing the edit-script where

+// Identity, UniqueX, UniqueY, and Modified are represented by the

+// '.', 'X', 'Y', and 'M' characters, respectively.

+func (es EditScript) String() string {

+ b := make([]byte, len(es))

+ for i, e := range es {

+ switch e {

+ case Identity:

+ b[i] = '.'

+ case UniqueX:

+ b[i] = 'X'

+ case UniqueY:

+ b[i] = 'Y'

+ case Modified:

+ b[i] = 'M'

+ default:

+ panic("invalid edit-type")

+ }

+ }

+ return string(b)

+}

+

+// stats returns a histogram of the number of each type of edit operation.

+func (es EditScript) stats() (s struct{ NI, NX, NY, NM int }) {

+ for _, e := range es {

+ switch e {

+ case Identity:

+ s.NI++

+ case UniqueX:

+ s.NX++

+ case UniqueY:

+ s.NY++

+ case Modified:

+ s.NM++

+ default:

+ panic("invalid edit-type")

+ }

+ }

+ return

+}

+

+// Dist is the Levenshtein distance and is guaranteed to be 0 if and only if

+// lists X and Y are equal.

+func (es EditScript) Dist() int { return len(es) - es.stats().NI }

+

+// LenX is the length of the X list.

+func (es EditScript) LenX() int { return len(es) - es.stats().NY }

+

+// LenY is the length of the Y list.

+func (es EditScript) LenY() int { return len(es) - es.stats().NX }

+

+// EqualFunc reports whether the symbols at indexes ix and iy are equal.

+// When called by Difference, the index is guaranteed to be within nx and ny.

+type EqualFunc func(ix int, iy int) Result

+

+// Result is the result of comparison.

+// NumSame is the number of sub-elements that are equal.

+// NumDiff is the number of sub-elements that are not equal.

+type Result struct{ NumSame, NumDiff int }

+

+// BoolResult returns a Result that is either Equal or not Equal.

+func BoolResult(b bool) Result {

+ if b {

+ return Result{NumSame: 1} // Equal, Similar

+ } else {

+ return Result{NumDiff: 2} // Not Equal, not Similar

+ }

+}

+

+// Equal indicates whether the symbols are equal. Two symbols are equal

+// if and only if NumDiff == 0. If Equal, then they are also Similar.

+func (r Result) Equal() bool { return r.NumDiff == 0 }

+

+// Similar indicates whether two symbols are similar and may be represented

+// by using the Modified type. As a special case, we consider binary comparisons

+// (i.e., those that return Result{1, 0} or Result{0, 1}) to be similar.

+//

+// The exact ratio of NumSame to NumDiff to determine similarity may change.

+func (r Result) Similar() bool {

+ // Use NumSame+1 to offset NumSame so that binary comparisons are similar.

+ return r.NumSame+1 >= r.NumDiff

+}

+

+var randBool = rand.New(rand.NewSource(time.Now().Unix())).Intn(2) == 0

+

+// Difference reports whether two lists of lengths nx and ny are equal

+// given the definition of equality provided as f.

+//

+// This function returns an edit-script, which is a sequence of operations

+// needed to convert one list into the other. The following invariants for

+// the edit-script are maintained:

+// - eq == (es.Dist()==0)

+// - nx == es.LenX()

+// - ny == es.LenY()

+//

+// This algorithm is not guaranteed to be an optimal solution (i.e., one that

+// produces an edit-script with a minimal Levenshtein distance). This algorithm

+// favors performance over optimality. The exact output is not guaranteed to

+// be stable and may change over time.

+func Difference(nx, ny int, f EqualFunc) (es EditScript) {

+ // This algorithm is based on traversing what is known as an "edit-graph".

+ // See Figure 1 from "An O(ND) Difference Algorithm and Its Variations"

+ // by Eugene W. Myers. Since D can be as large as N itself, this is

+ // effectively O(N^2). Unlike the algorithm from that paper, we are not

+ // interested in the optimal path, but at least some "decent" path.

+ //

+ // For example, let X and Y be lists of symbols:

+ // X = [A B C A B B A]

+ // Y = [C B A B A C]

+ //

+ // The edit-graph can be drawn as the following:

+ // A B C A B B A

+ // ┌─────────────┐

+ // C │_|_|\|_|_|_|_│ 0

+ // B │_|\|_|_|\|\|_│ 1

+ // A │\|_|_|\|_|_|\│ 2

+ // B │_|\|_|_|\|\|_│ 3

+ // A │\|_|_|\|_|_|\│ 4

+ // C │ | |\| | | | │ 5

+ // └─────────────┘ 6

+ // 0 1 2 3 4 5 6 7

+ //

+ // List X is written along the horizontal axis, while list Y is written

+ // along the vertical axis. At any point on this grid, if the symbol in

+ // list X matches the corresponding symbol in list Y, then a '\' is drawn.

+ // The goal of any minimal edit-script algorithm is to find a path from the

+ // top-left corner to the bottom-right corner, while traveling through the

+ // fewest horizontal or vertical edges.

+ // A horizontal edge is equivalent to inserting a symbol from list X.

+ // A vertical edge is equivalent to inserting a symbol from list Y.

+ // A diagonal edge is equivalent to a matching symbol between both X and Y.

+

+ // Invariants:

+ // - 0 ≤ fwdPath.X ≤ (fwdFrontier.X, revFrontier.X) ≤ revPath.X ≤ nx

+ // - 0 ≤ fwdPath.Y ≤ (fwdFrontier.Y, revFrontier.Y) ≤ revPath.Y ≤ ny

+ //

+ // In general:

+ // - fwdFrontier.X < revFrontier.X

+ // - fwdFrontier.Y < revFrontier.Y

+ //

+ // Unless it is time for the algorithm to terminate.

+ fwdPath := path{+1, point{0, 0}, make(EditScript, 0, (nx+ny)/2)}

+ revPath := path{-1, point{nx, ny}, make(EditScript, 0)}

+ fwdFrontier := fwdPath.point // Forward search frontier

+ revFrontier := revPath.point // Reverse search frontier

+

+ // Search budget bounds the cost of searching for better paths.

+ // The longest sequence of non-matching symbols that can be tolerated is

+ // approximately the square-root of the search budget.

+ searchBudget := 4 * (nx + ny) // O(n)

+

+ // Running the tests with the "cmp_debug" build tag prints a visualization

+ // of the algorithm running in real-time. This is educational for

+ // understanding how the algorithm works. See debug_enable.go.

+ f = debug.Begin(nx, ny, f, &fwdPath.es, &revPath.es)

+

+ // The algorithm below is a greedy, meet-in-the-middle algorithm for

+ // computing sub-optimal edit-scripts between two lists.

+ //

+ // The algorithm is approximately as follows:

+ // - Searching for differences switches back-and-forth between

+ // a search that starts at the beginning (the top-left corner), and

+ // a search that starts at the end (the bottom-right corner).

+ // The goal of the search is to connect with the search

+ // from the opposite corner.

+ // - As we search, we build a path in a greedy manner,

+ // where the first match seen is added to the path (this is sub-optimal,

+ // but provides a decent result in practice). When matches are found,

+ // we try the next pair of symbols in the lists and follow all matches

+ // as far as possible.

+ // - When searching for matches, we search along a diagonal going through

+ // the "frontier" point. If no matches are found,

+ // we advance the frontier towards the opposite corner.

+ // - This algorithm terminates when either the X coordinates or the

+ // Y coordinates of the forward and reverse frontier points ever intersect.

+

+ // This algorithm is correct even if searching only in the forward direction

+ // or in the reverse direction. We do both because it is often observed

+ // that two lists differ because elements were added to the front

+ // or end of the other list.

+ //

+ // Non-deterministically start with either the forward or reverse direction

+ // to introduce some deliberate instability so that we have the flexibility

+ // to change this algorithm in the future.

+ if flags.Deterministic || randBool {

+ goto forwardSearch

+ } else {

+ goto reverseSearch

+ }

+

+forwardSearch:

+ {

+ // Forward search from the beginning.

+ if fwdFrontier.X >= revFrontier.X || fwdFrontier.Y >= revFrontier.Y || searchBudget == 0 {

+ goto finishSearch

+ }

+ for stop1, stop2, i := false, false, 0; !(stop1 && stop2) && searchBudget > 0; i++ {

+ // Search in a diagonal pattern for a match.

+ z := zigzag(i)

+ p := point{fwdFrontier.X + z, fwdFrontier.Y - z}

+ switch {

+ case p.X >= revPath.X || p.Y < fwdPath.Y:

+ stop1 = true // Hit top-right corner

+ case p.Y >= revPath.Y || p.X < fwdPath.X:

+ stop2 = true // Hit bottom-left corner

+ case f(p.X, p.Y).Equal():

+ // Match found, so connect the path to this point.

+ fwdPath.connect(p, f)

+ fwdPath.append(Identity)

+ // Follow sequence of matches as far as possible.

+ for fwdPath.X < revPath.X && fwdPath.Y < revPath.Y {

+ if !f(fwdPath.X, fwdPath.Y).Equal() {

+ break

+ }

+ fwdPath.append(Identity)

+ }

+ fwdFrontier = fwdPath.point

+ stop1, stop2 = true, true

+ default:

+ searchBudget-- // Match not found

+ }

+ debug.Update()

+ }

+ // Advance the frontier towards reverse point.

+ if revPath.X-fwdFrontier.X >= revPath.Y-fwdFrontier.Y {

+ fwdFrontier.X++

+ } else {

+ fwdFrontier.Y++

+ }

+ goto reverseSearch

+ }

+

+reverseSearch:

+ {

+ // Reverse search from the end.

+ if fwdFrontier.X >= revFrontier.X || fwdFrontier.Y >= revFrontier.Y || searchBudget == 0 {

+ goto finishSearch

+ }

+ for stop1, stop2, i := false, false, 0; !(stop1 && stop2) && searchBudget > 0; i++ {

+ // Search in a diagonal pattern for a match.

+ z := zigzag(i)

+ p := point{revFrontier.X - z, revFrontier.Y + z}

+ switch {

+ case fwdPath.X >= p.X || revPath.Y < p.Y:

+ stop1 = true // Hit bottom-left corner

+ case fwdPath.Y >= p.Y || revPath.X < p.X:

+ stop2 = true // Hit top-right corner

+ case f(p.X-1, p.Y-1).Equal():

+ // Match found, so connect the path to this point.

+ revPath.connect(p, f)

+ revPath.append(Identity)

+ // Follow sequence of matches as far as possible.

+ for fwdPath.X < revPath.X && fwdPath.Y < revPath.Y {

+ if !f(revPath.X-1, revPath.Y-1).Equal() {

+ break

+ }

+ revPath.append(Identity)

+ }

+ revFrontier = revPath.point

+ stop1, stop2 = true, true

+ default:

+ searchBudget-- // Match not found

+ }

+ debug.Update()

+ }

+ // Advance the frontier towards forward point.

+ if revFrontier.X-fwdPath.X >= revFrontier.Y-fwdPath.Y {

+ revFrontier.X--

+ } else {

+ revFrontier.Y--

+ }

+ goto forwardSearch

+ }

+

+finishSearch:

+ // Join the forward and reverse paths and then append the reverse path.

+ fwdPath.connect(revPath.point, f)

+ for i := len(revPath.es) - 1; i >= 0; i-- {

+ t := revPath.es[i]

+ revPath.es = revPath.es[:i]

+ fwdPath.append(t)

+ }

+ debug.Finish()

+ return fwdPath.es

+}

+

+type path struct {

+ dir int // +1 if forward, -1 if reverse

+ point // Leading point of the EditScript path

+ es EditScript

+}

+

+// connect appends any necessary Identity, Modified, UniqueX, or UniqueY types

+// to the edit-script to connect p.point to dst.

+func (p *path) connect(dst point, f EqualFunc) {

+ if p.dir > 0 {

+ // Connect in forward direction.

+ for dst.X > p.X && dst.Y > p.Y {

+ switch r := f(p.X, p.Y); {

+ case r.Equal():

+ p.append(Identity)

+ case r.Similar():

+ p.append(Modified)

+ case dst.X-p.X >= dst.Y-p.Y:

+ p.append(UniqueX)

+ default:

+ p.append(UniqueY)

+ }

+ }

+ for dst.X > p.X {

+ p.append(UniqueX)

+ }

+ for dst.Y > p.Y {

+ p.append(UniqueY)

+ }

+ } else {

+ // Connect in reverse direction.

+ for p.X > dst.X && p.Y > dst.Y {

+ switch r := f(p.X-1, p.Y-1); {

+ case r.Equal():

+ p.append(Identity)

+ case r.Similar():

+ p.append(Modified)

+ case p.Y-dst.Y >= p.X-dst.X:

+ p.append(UniqueY)

+ default:

+ p.append(UniqueX)

+ }

+ }

+ for p.X > dst.X {

+ p.append(UniqueX)

+ }

+ for p.Y > dst.Y {

+ p.append(UniqueY)

+ }

+ }

+}

+

+func (p *path) append(t EditType) {

+ p.es = append(p.es, t)

+ switch t {

+ case Identity, Modified:

+ p.add(p.dir, p.dir)

+ case UniqueX:

+ p.add(p.dir, 0)

+ case UniqueY:

+ p.add(0, p.dir)

+ }

+ debug.Update()

+}

+

+type point struct{ X, Y int }

+

+func (p *point) add(dx, dy int) { p.X += dx; p.Y += dy }

+

+// zigzag maps a consecutive sequence of integers to a zig-zag sequence.

+//

+// [0 1 2 3 4 5 ...] => [0 -1 +1 -2 +2 ...]

+func zigzag(x int) int {

+ if x&1 != 0 {

+ x = ^x

+ }

+ return x >> 1

+}
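To see how `zigzag` drives the diagonal search, it helps to list the offsets it produces. The sketch below copies `zigzag` from the diff and adds a hypothetical helper, `probeOffsets`, that collects the first few values. In the forward search each offset z is applied as `point{fwdFrontier.X + z, fwdFrontier.Y - z}`, so successive probes fan out on both sides of the frontier along one anti-diagonal.

```go
package main

import "fmt"

// zigzag maps 0, 1, 2, 3, 4, ... to 0, -1, +1, -2, +2, ...
// Odd inputs are bitwise complemented before the arithmetic shift, which
// yields the negative half of the sequence.
func zigzag(x int) int {
	if x&1 != 0 {
		x = ^x
	}
	return x >> 1
}

// probeOffsets returns the first n offsets in the order the search visits
// them. This helper is hypothetical and exists only for illustration.
func probeOffsets(n int) []int {
	out := make([]int, n)
	for i := range out {
		out[i] = zigzag(i)
	}
	return out
}

func main() {
	fmt.Println(probeOffsets(7)) // [0 -1 1 -2 2 -3 3]
}
```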

diff --git a/vendor/github.com/google/go-cmp/cmp/internal/flags/flags.go b/vendor/github.com/google/go-cmp/cmp/internal/flags/flags.go

new file mode 100644

index 0000000..d8e459c

--- /dev/null

+++ b/vendor/github.com/google/go-cmp/cmp/internal/flags/flags.go

@@ -0,0 +1,9 @@

+// Copyright 2019, The Go Authors. All rights reserved.

+// Use of this source code is governed by a BSD-style

+// license that can be found in the LICENSE file.

+

+package flags

+

+// Deterministic controls whether the output of Diff should be deterministic.

+// This is only used for testing.

+var Deterministic bool

diff --git a/vendor/github.com/google/go-cmp/cmp/internal/function/func.go b/vendor/github.com/google/go-cmp/cmp/internal/function/func.go

new file mode 100644

index 0000000..d127d43

--- /dev/null

+++ b/vendor/github.com/google/go-cmp/cmp/internal/function/func.go

@@ -0,0 +1,99 @@

+// Copyright 2017, The Go Authors. All rights reserved.

+// Use of this source code is governed by a BSD-style

+// license that can be found in the LICENSE file.

+

+// Package function provides functionality for identifying function types.

+package function

+

+import (

+ "reflect"

+ "regexp"

+ "runtime"

+ "strings"

+)

+

+type funcType int

+

+const (

+ _ funcType = iota

+

+ tbFunc // func(T) bool

+ ttbFunc // func(T, T) bool

+ trbFunc // func(T, R) bool

+ tibFunc // func(T, I) bool

+ trFunc // func(T) R

+

+ Equal = ttbFunc // func(T, T) bool

+ EqualAssignable = tibFunc // func(T, I) bool; encapsulates func(T, T) bool

+ Transformer = trFunc // func(T) R

+ ValueFilter = ttbFunc // func(T, T) bool

+ Less = ttbFunc // func(T, T) bool

+ ValuePredicate = tbFunc // func(T) bool

+ KeyValuePredicate = trbFunc // func(T, R) bool

+)

+

+var boolType = reflect.TypeOf(true)

+

+// IsType reports whether the reflect.Type is of the specified function type.

+func IsType(t reflect.Type, ft funcType) bool {

+ if t == nil || t.Kind() != reflect.Func || t.IsVariadic() {

+ return false

+ }

+ ni, no := t.NumIn(), t.NumOut()

+ switch ft {

+ case tbFunc: // func(T) bool

+ if ni == 1 && no == 1 && t.Out(0) == boolType {

+ return true

+ }

+ case ttbFunc: // func(T, T) bool

+ if ni == 2 && no == 1 && t.In(0) == t.In(1) && t.Out(0) == boolType {

+ return true

+ }

+ case trbFunc: // func(T, R) bool

+ if ni == 2 && no == 1 && t.Out(0) == boolType {

+ return true

+ }

+ case tibFunc: // func(T, I) bool

+ if ni == 2 && no == 1 && t.In(0).AssignableTo(t.In(1)) && t.Out(0) == boolType {

+ return true

+ }

+ case trFunc: // func(T) R

+ if ni == 1 && no == 1 {

+ return true

+ }

+ }

+ return false

+}

+

+var lastIdentRx = regexp.MustCompile(`[_\p{L}][_\p{L}\p{N}]*$`)

+

+// NameOf returns the name of the function value.

+func NameOf(v reflect.Value) string {

+ fnc := runtime.FuncForPC(v.Pointer())

+ if fnc == nil {

+ return ""

+ }

+ fullName := fnc.Name() // e.g., "long/path/name/mypkg.(*MyType).(long/path/name/mypkg.myMethod)-fm"

+

+ // Method closures have a "-fm" suffix.

+ fullName = strings.TrimSuffix(fullName, "-fm")

+

+ var name string

+ for len(fullName) > 0 {

+ inParen := strings.HasSuffix(fullName, ")")

+ fullName = strings.TrimSuffix(fullName, ")")

+

+ s := lastIdentRx.FindString(fullName)

+ if s == "" {

+ break

+ }

+ name = s + "." + name

+ fullName = strings.TrimSuffix(fullName, s)

+

+ if i := strings.LastIndexByte(fullName, '('); inParen && i >= 0 {

+ fullName = fullName[:i]

+ }

+ fullName = strings.TrimSuffix(fullName, ".")

+ }

+ return strings.TrimSuffix(name, ".")

+}
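The shape checks in `IsType` rely only on `reflect.Type` methods, so a single case is easy to reproduce. A minimal sketch of the `func(T, T) bool` check, assuming the same conditions as the `ttbFunc` branch above; `isEqualFunc` and `looksLikeEqual` are hypothetical names, not part of package function.

```go
package main

import (
	"fmt"
	"reflect"
)

var boolType = reflect.TypeOf(true)

// isEqualFunc reports whether t has the shape func(T, T) bool:
// non-variadic, two inputs of identical type, and a single bool result.
func isEqualFunc(t reflect.Type) bool {
	if t == nil || t.Kind() != reflect.Func || t.IsVariadic() {
		return false
	}
	return t.NumIn() == 2 && t.NumOut() == 1 &&
		t.In(0) == t.In(1) && t.Out(0) == boolType
}

// looksLikeEqual is a convenience wrapper that accepts any value.
func looksLikeEqual(fn interface{}) bool {
	return isEqualFunc(reflect.TypeOf(fn))
}

func main() {
	fmt.Println(looksLikeEqual(func(a, b int) bool { return a == b }))       // true
	fmt.Println(looksLikeEqual(func(a int, b string) bool { return false })) // false
	fmt.Println(looksLikeEqual(func(a ...int) bool { return true }))         // false
	fmt.Println(looksLikeEqual(42))                                          // false
}
```

The `t == nil` guard matters because `reflect.TypeOf` returns nil for a nil interface value.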

diff --git a/vendor/github.com/google/go-cmp/cmp/internal/value/name.go b/vendor/github.com/google/go-cmp/cmp/internal/value/name.go

new file mode 100644

index 0000000..7b498bb

--- /dev/null

+++ b/vendor/github.com/google/go-cmp/cmp/internal/value/name.go

@@ -0,0 +1,164 @@

+// Copyright 2020, The Go Authors. All rights reserved.

+// Use of this source code is governed by a BSD-style

+// license that can be found in the LICENSE file.

+

+package value

+

+import (

+ "reflect"

+ "strconv"

+)

+

+var anyType = reflect.TypeOf((*interface{})(nil)).Elem()

+

+// TypeString is nearly identical to reflect.Type.String,

+// but has an additional option to specify that full type names be used.

+func TypeString(t reflect.Type, qualified bool) string {

+ return string(appendTypeName(nil, t, qualified, false))

+}

+

+func appendTypeName(b []byte, t reflect.Type, qualified, elideFunc bool) []byte {

+ // BUG: Go reflection provides no way to disambiguate two named types

+ // of the same name and within the same package,

+ // but declared within the namespace of different functions.

+

+ // Use the "any" alias instead of "interface{}" for better readability.

+ if t == anyType {

+ return append(b, "any"...)

+ }

+

+ // Named type.

+ if t.Name() != "" {

+ if qualified && t.PkgPath() != "" {

+ b = append(b, '"')

+ b = append(b, t.PkgPath()...)

+ b = append(b, '"')

+ b = append(b, '.')

+ b = append(b, t.Name()...)

+ } else {

+ b = append(b, t.String()...)

+ }

+ return b

+ }

+

+ // Unnamed type.

+ switch k := t.Kind(); k {

+ case reflect.Bool, reflect.String, reflect.UnsafePointer,

+ reflect.Int, reflect.Int8, reflect.Int16, reflect.Int32, reflect.Int64,

+ reflect.Uint, reflect.Uint8, reflect.Uint16, reflect.Uint32, reflect.Uint64, reflect.Uintptr,

+ reflect.Float32, reflect.Float64, reflect.Complex64, reflect.Complex128:

+ b = append(b, k.String()...)

+ case reflect.Chan:

+ if t.ChanDir() == reflect.RecvDir {

+ b = append(b, "<-"...)

+ }

+ b = append(b, "chan"...)

+ if t.ChanDir() == reflect.SendDir {

+ b = append(b, "<-"...)

+ }

+ b = append(b, ' ')

+ b = appendTypeName(b, t.Elem(), qualified, false)

+ case reflect.Func:

+ if !elideFunc {

+ b = append(b, "func"...)

+ }

+ b = append(b, '(')

+ for i := 0; i < t.NumIn(); i++ {

+ if i > 0 {

+ b = append(b, ", "...)

+ }

+ if i == t.NumIn()-1 && t.IsVariadic() {

+ b = append(b, "..."...)

+ b = appendTypeName(b, t.In(i).Elem(), qualified, false)

+ } else {

+ b = appendTypeName(b, t.In(i), qualified, false)

+ }

+ }

+ b = append(b, ')')

+ switch t.NumOut() {

+ case 0:

+ // Do nothing

+ case 1:

+ b = append(b, ' ')

+ b = appendTypeName(b, t.Out(0), qualified, false)

+ default:

+ b = append(b, " ("...)

+ for i := 0; i < t.NumOut(); i++ {

+ if i > 0 {

+ b = append(b, ", "...)

+ }

+ b = appendTypeName(b, t.Out(i), qualified, false)

+ }

+ b = append(b, ')')

+ }

+ case reflect.Struct:

+ b = append(b, "struct{ "...)

+ for i := 0; i < t.NumField(); i++ {

+ if i > 0 {

+ b = append(b, "; "...)

+ }

+ sf := t.Field(i)

+ if !sf.Anonymous {

+ if qualified && sf.PkgPath != "" {

+ b = append(b, '"')

+ b = append(b, sf.PkgPath...)

+ b = append(b, '"')

+ b = append(b, '.')

+ }

+ b = append(b, sf.Name...)

+ b = append(b, ' ')

+ }

+ b = appendTypeName(b, sf.Type, qualified, false)

+ if sf.Tag != "" {

+ b = append(b, ' ')

+ b = strconv.AppendQuote(b, string(sf.Tag))

+ }

+ }

+ if b[len(b)-1] == ' ' {

+ b = b[:len(b)-1]

+ } else {

+ b = append(b, ' ')

+ }

+ b = append(b, '}')

+ case reflect.Slice, reflect.Array:

+ b = append(b, '[')

+ if k == reflect.Array {

+ b = strconv.AppendUint(b, uint64(t.Len()), 10)

+ }

+ b = append(b, ']')

+ b = appendTypeName(b, t.Elem(), qualified, false)

+ case reflect.Map:

+ b = append(b, "map["...)

+ b = appendTypeName(b, t.Key(), qualified, false)

+ b = append(b, ']')

+ b = appendTypeName(b, t.Elem(), qualified, false)

+ case reflect.Ptr:

+ b = append(b, '*')

+ b = appendTypeName(b, t.Elem(), qualified, false)

+ case reflect.Interface:

+ b = append(b, "interface{ "...)

+ for i := 0; i < t.NumMethod(); i++ {

+ if i > 0 {

+ b = append(b, "; "...)

+ }

+ m := t.Method(i)

+ if qualified && m.PkgPath != "" {

+ b = append(b, '"')

+ b = append(b, m.PkgPath...)

+ b = append(b, '"')

+ b = append(b, '.')

+ }

+ b = append(b, m.Name...)

+ b = appendTypeName(b, m.Type, qualified, true)

+ }

+ if b[len(b)-1] == ' ' {

+ b = b[:len(b)-1]

+ } else {

+ b = append(b, ' ')

+ }

+ b = append(b, '}')

+ default:

+ panic("invalid kind: " + k.String())

+ }

+ return b

+}